You’ve seen the AI Twitter timeline. Engineers shipping production code in twenty minutes. Founders rebuilding their stack over a weekend with Cursor. Comments like “I haven’t typed code in three months.”

I’ve spent the last few months hiring engineers for an AI-heavy product. The reality of what I find when I interview is nothing like that timeline.

Most engineers I talk to are using AI the way I was using it in early 2024. Many haven’t built a single agent skill or workflow they reuse. Some haven’t tried Claude Code yet. The senior engineers, the ones I most want to hire on every other dimension, are often the ones using AI the least.

That last part surprised me until I understood why. This post is about what I learned, and how I now interview for it.

Why this matters

If you are a CTO, hiring manager, or team lead, you are going to interview a lot of engineers who say “I use AI every day.” That sentence has become the “I’m a fast learner” of 2026. It tells you nothing.

The difference between an engineer who asks ChatGPT to suggest a regex and an engineer who has built a workflow that ships a feature end-to-end is enormous. In dollars per quarter, it is six figures. In team output, it is the difference between holding the line and moving the line.

If you cannot tell those two apart in an interview, you are flying blind. You need a rubric.

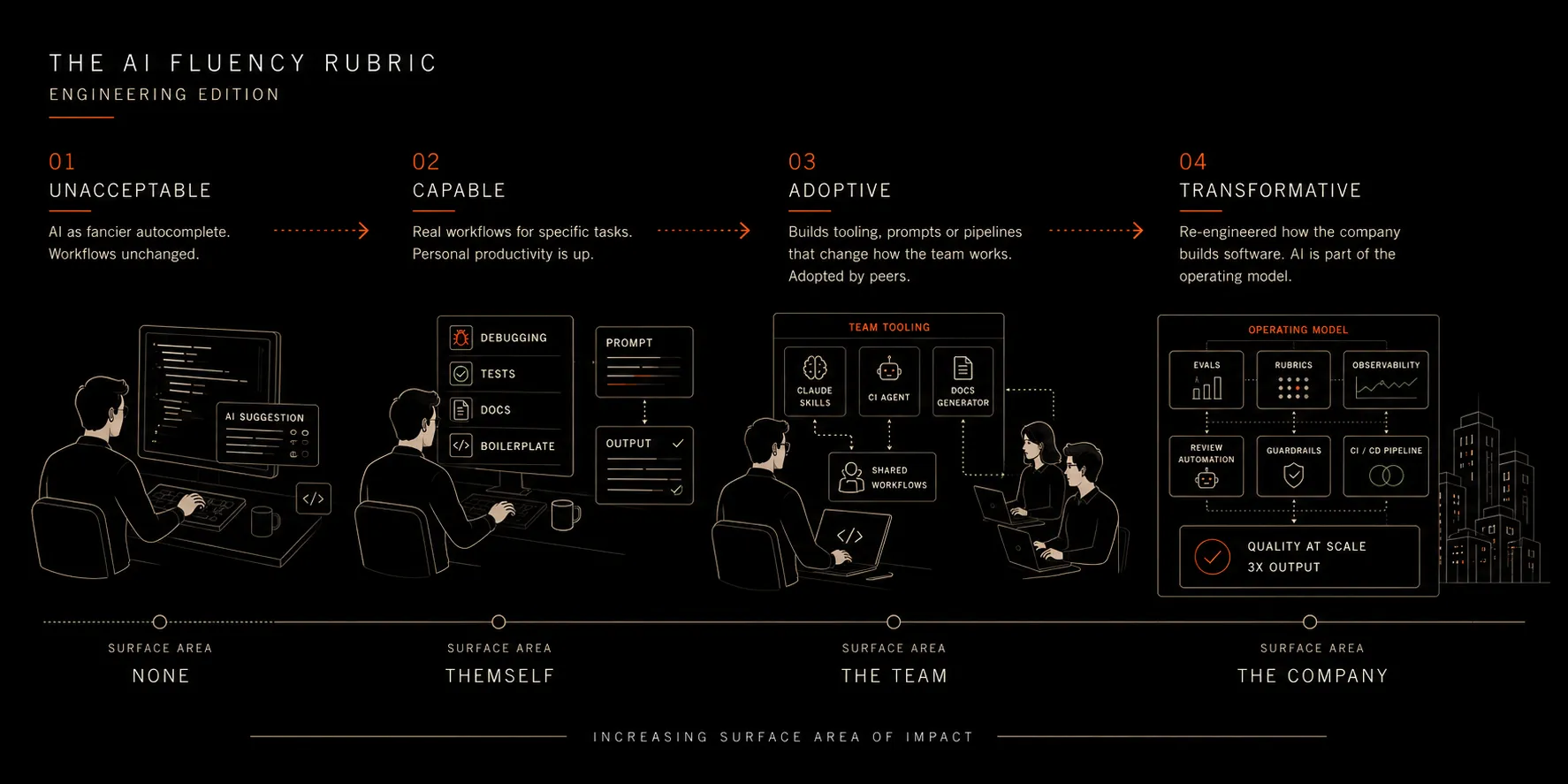

The Zapier rubric, engineering edition

Zapier published an AI Fluency Rubric in March 2026, with four levels per role. The engineering ladder is the cleanest version, so I’ll use that one.

| Level | How they use AI | Surface area | What you’d see in an interview |
|---|---|---|---|
| Unacceptable | AI as fancier autocomplete. Accepts Copilot suggestions. Asks ChatGPT to explain a stack trace. Workflows unchanged. | None. Same design, review, and test process as before. | “Well, I use Copilot.” No examples of how the work has changed. |
| Capable | Real workflows for specific tasks: debugging, drafting tests, generating boilerplate, first drafts of docs. Describes trade-offs. | Themselves. Personal productivity is up; the team hasn’t noticed. | Names which tool for which task, why, and the failure modes they’ve learned to spot. |
| Adoptive | Has built tooling, prompts, or pipelines that change how the team works. Producer of AI workflows, not consumer. | The team. Their artifacts get adopted by peers. | Concrete examples: a custom Claude Code skill, an agent watching CI, an internal doc generator the team uses weekly. |
| Transformative | Re-engineered how the company builds software. AI is part of the operating model, not the toolbelt. | The company. Review norms, CI/CD, and release process changed because of their work. | The team is 3x with the quality bar raised. They can point at the gates, evals, and systems they designed. |

The levels are cumulative. An Adoptive engineer can do Capable things by definition. The honest test is: what’s the highest level you can defend with evidence in an interview?

Map your engineering org against this rubric. The distribution will probably surprise you. Mine did.
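If you want to keep that mapping honest, it helps to write it down as data. The following is a minimal sketch, not anything Zapier publishes: the `Level` enum, the `highest_defensible` and `distribution` helpers, and the sample team are all hypothetical names I made up for illustration. It assumes a strict reading of "cumulative": a level only counts if every level below it also has evidence.

```python
from collections import Counter
from enum import IntEnum


class Level(IntEnum):
    """The four rubric levels, ordered so they can be compared."""
    UNACCEPTABLE = 0
    CAPABLE = 1
    ADOPTIVE = 2
    TRANSFORMATIVE = 3


def highest_defensible(evidence: dict) -> Level:
    """Return the highest level backed by evidence, without skipping a level.

    `evidence` maps a Level to a list of concrete examples (strings).
    Unacceptable is the floor and needs no evidence.
    """
    level = Level.UNACCEPTABLE
    for candidate in (Level.CAPABLE, Level.ADOPTIVE, Level.TRANSFORMATIVE):
        if not evidence.get(candidate):
            break  # cumulative rubric: a gap caps the result here
        level = candidate
    return level


def distribution(team: dict) -> Counter:
    """Count how many engineers land on each level."""
    return Counter(highest_defensible(ev).name for ev in team.values())


# Hypothetical team; the evidence strings are placeholders.
team = {
    "engineer_a": {Level.CAPABLE: ["reusable debugging prompt"]},
    "engineer_b": {
        Level.CAPABLE: ["test-drafting workflow"],
        Level.ADOPTIVE: ["CI-watching agent adopted by peers"],
    },
    # Claims company-wide impact but has no lower-level evidence to show.
    "engineer_c": {Level.TRANSFORMATIVE: ["says the release process changed"]},
    "engineer_d": {},
}

print(distribution(team))
```

The strictness is a design choice. It forces the "defend with evidence" part: a big claim with nothing underneath it scores the same as no claim at all.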

The good engineer paradox

Here is the part nobody on AI Twitter writes about, and the part that took me a while to see clearly.

The most experienced engineers I have interviewed in the last six months are often the ones using AI the least.

Not in an Unacceptable way. Most of them sit in Capable. They use Copilot or Cursor competently. They have a few prompts they reuse. But they haven’t built tools, they haven’t designed workflows, they haven’t stepped into Adoptive. And they are the ones I most want to hire on every dimension other than AI competence.

Why? Because the trait that made them good engineers, the instinct to read every line, to understand every dependency, to control what ships, is exactly the trait that holds them back in this transition.

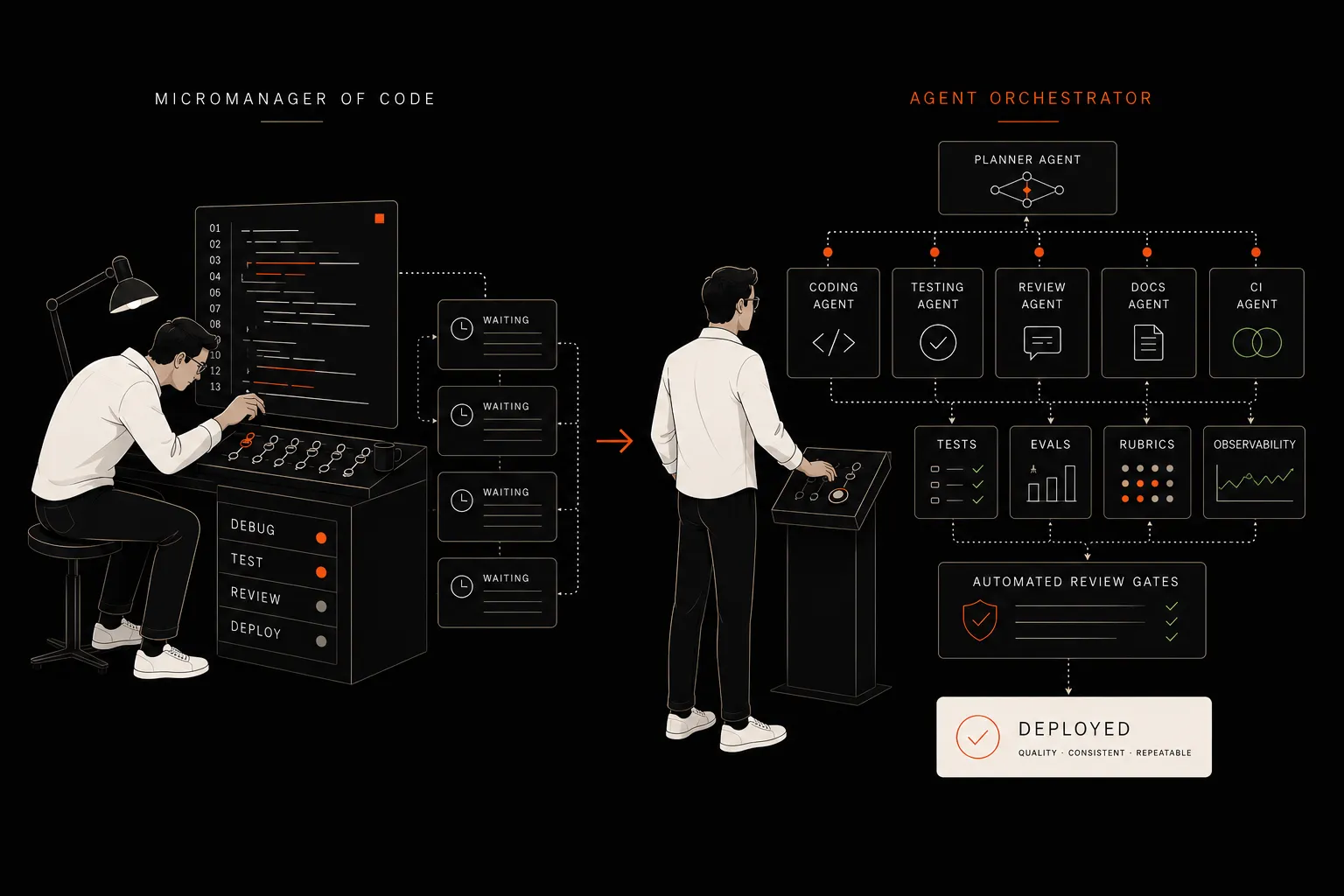

In 2024 it was a virtue to read every line of generated code. The models were unreliable and the cost of a bad commit was real. In 2026 that same habit means you are still a micromanager of code instead of an orchestrator of coding agents.

The shift from Capable to Adoptive is not technical. It is psychological. It is letting go of the part of the job that used to be the whole job.

The engineers who get to Adoptive faster are not the most senior. They are the ones who internalised that quality is now enforced through tests, evals, rubrics, observability, and review systems, not through reading every diff. That mental flip is harder than learning any tool.

From micromanager to orchestrator

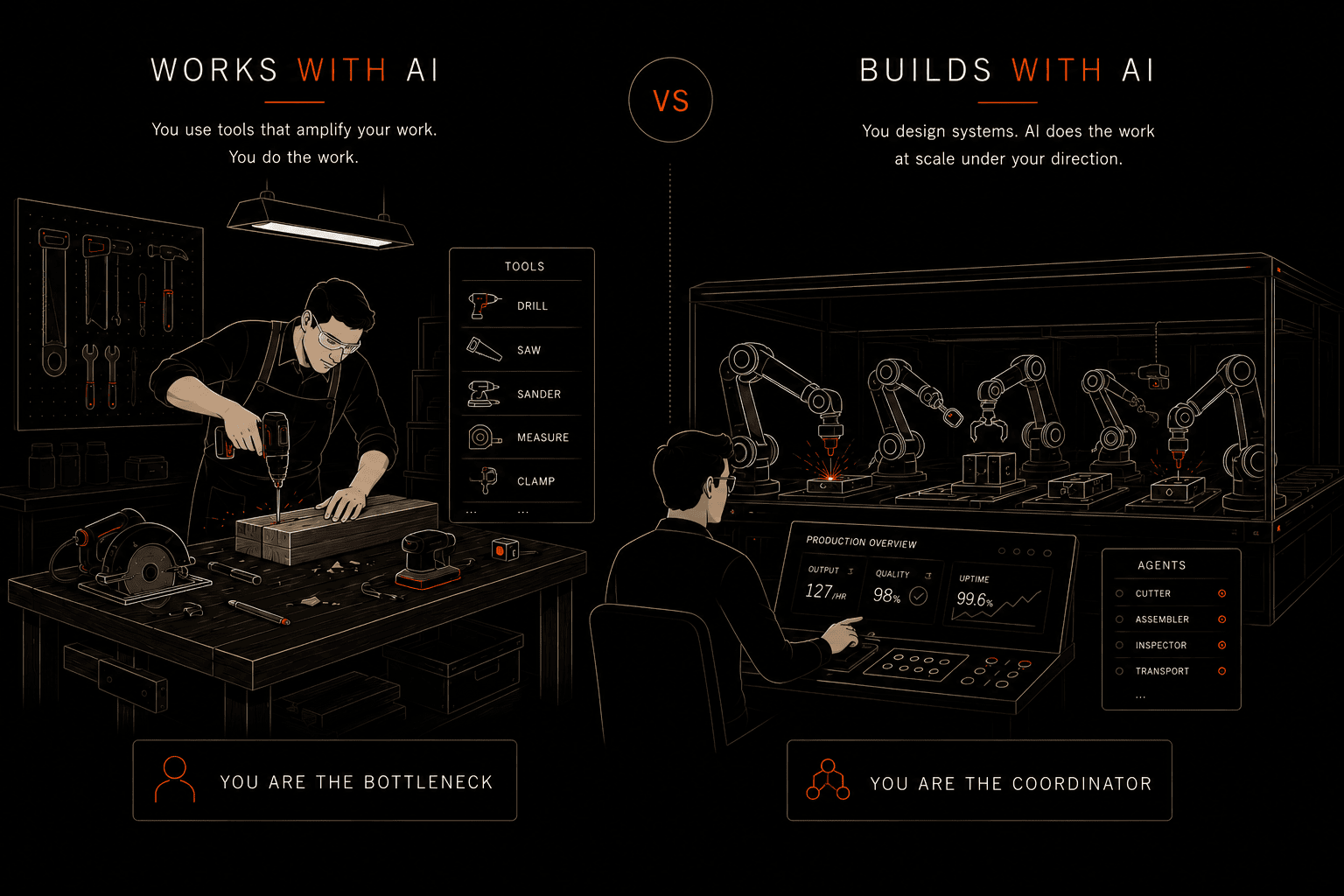

The frame I use in interviews now: are you still trying to control the code, or have you started orchestrating the system that produces the code?

A micromanager of code reads every line, accepts or rejects each suggestion, runs the show by being the bottleneck. This was correct in 2024.

An orchestrator doesn’t read every line. They build the guardrails, the tests, the evals, the rubrics, the review automation that catches the things they used to catch by reading. They trust the system they built more than their own line-by-line review. Quality goes up, not down, because the system is consistent and they are not.

You can’t fake this in an interview. The orchestrators have stories. The micromanagers have opinions.

Five interview questions that work

I’ve refined these over the last few months. Each one separates Capable from Adoptive from Transformative.

1. “Walk me through a task you finished this week using AI. What did you write yourself, what did you accept from the model, and how did you decide?”

Capable candidates describe the task and the prompt. Adoptive candidates describe the validation step, the review step, and what they kept manual and why. Transformative candidates describe the tooling that automated the validation.

2. “Tell me about a workflow you’ve built around an AI tool that you reuse every week.”

Unacceptable: “well, I use Copilot.” Capable: a prompt template they reuse. Adoptive: a tool or script or skill they built. Transformative: something other engineers on their team now use.

3. “When did you last reject a suggestion from the model that you initially thought was correct? What was the signal that made you reject it?”

This is the calibration question.

Capable candidates have rejected suggestions but can’t articulate the signal. Adoptive candidates describe specific patterns: a missing edge case in a test, a wrong library version, a side effect they spotted by running the code. Transformative candidates describe the eval or guard they’ve written that catches that signal automatically.

4. “Show me a prompt, a skill, or a piece of tooling you’ve shared with your team that changed how they work.”

This is the Adoptive line. If they can’t answer, they are Capable at best. If they can, follow up on adoption: did people use it once, or did it become part of the team’s flow?

5. “If I gave you two weeks with zero shipping pressure, what AI tooling would you build for your team and why?”

This is the Transformative signal.

Capable candidates would do better testing or more documentation. Adoptive candidates describe a tool. Transformative candidates describe a system that changes how the team works.

None of these are trick questions. They are stories the candidate either has or does not.

Applying this to your team

Before you use this on candidates, run it on yourself and on your team.

Map every engineer against the four levels. Be honest. Most teams I’ve seen have a fat Capable middle, a small Adoptive group, and one or two engineers at Transformative. The profile that probably looked like Unacceptable eighteen months ago is now extinct, replaced by people who use AI badly but constantly.

The growth path that matters most is Capable to Adoptive. The leap from Adoptive to Transformative requires deliberate investment in systems, and not every engineer needs to make it. But every engineer who stays in Capable through 2026 is losing ground, in real terms, every quarter.

If you are a CTO, the highest-value hire right now is not the senior engineer who has been doing it for fifteen years. It is the engineer who has spent the last twelve months building tooling that the rest of their team now relies on.

Closing

The bar moves every six months. Adoptive today will be Capable in eighteen months. Transformative today will be the new Adoptive. The labels are temporary.

What stays is the structure. Engineers either build workflows that compound, or they perform the same work faster with AI on top. Those two paths look identical in a one-line résumé and very different in production.

If you are hiring for AI fluency, or trying to figure out where your team sits, I’m happy to compare notes. We’ve made every mistake on this rubric at FITIZENS over the last year. If something here resonates, email me.